We wrote a full guide on customer discovery conversations — the questions to ask, the structure, the mindset. This is the companion piece. Because knowing what to ask is only half the battle. The other half is knowing what not to ask.

Bad discovery questions don't announce themselves. They feel smart. They feel productive. You walk out of the interview thinking you learned something. Then you realize you just spent thirty minutes listening to a stranger politely agree with your hallucinated product vision.

We've sat in on hundreds of customer interviews over the years. The same poisoned questions show up everywhere — from first-time founders to seasoned PMs who really should know better. Here are the ten we see most often, why they're terrible, and what to ask instead.

1. "Don't you think it would be amazing if you could just [thing we're building]?"

Why it seems smart: You're testing your hypothesis! You're getting customer validation! You're being data-driven!

Why it's terrible: This isn't a question. It's a pitch wearing a question mark as a disguise. You've embedded the answer inside the question and wrapped it in social pressure. Nobody looks a hopeful founder in the eye and says "No, that sounds pointless." What you'll hear is "Yeah, that could be cool" — which is the customer discovery equivalent of "let's get lunch sometime." It means absolutely nothing.

What to ask instead: "Walk me through the last time you dealt with [problem area]. What happened?" Let them describe their reality. If the problem you're solving is real, it'll surface without prompting.

2. "Would you use a product that does X?"

Why it seems smart: You're validating demand before building. Lean startup methodology. Very responsible.

Why it's terrible: People are spectacularly bad at predicting their own future behavior. We've watched someone say "I would absolutely use that" and then fail to sign up for the free beta three weeks later. Hypothetical questions produce hypothetical answers. The human brain treats "would you" questions as a creative writing exercise, not a commitment. Your interviewee is essentially writing fan fiction about a version of themselves who is more organized, more proactive, and more willing to adopt new tools than they actually are.

What to ask instead: "How are you handling this today? What tools or workarounds are you using?" If they've spent actual time and money solving this problem already, the demand is real. If they shrug and say "it's fine, I guess," your product is a vitamin, not a painkiller.

3. "How much would you pay for something like this?"

Why it seems smart: You're doing pricing research! You'll build a financial model with real data!

Why it's terrible: Congratulations, you've just asked someone to appraise an imaginary product they haven't used in a context they're not currently in with money they're not currently spending. The number they give you is pulled from the same place as their answer to "how many jelly beans are in this jar" — pure vibes. Worse, they'll systematically lowball you because there's no cost to saying a small number and it makes them feel like savvy negotiators. We've seen founders set pricing based on these answers and leave enormous amounts of money on the table.

What to ask instead: "What are you spending today to solve this problem — in money, time, or people?" This tells you what the problem is actually worth to them in their current reality, not in a hypothetical future where your product exists.

4. "What features would you want in a product like this?"

Why it seems smart: You're co-designing with your customer! User-centered design! Very collaborative!

Why it's terrible: You've just handed the product roadmap to someone who doesn't understand your technical constraints, your business model, your competitive landscape, or software design. This is like asking a passenger to design an airplane. They'll describe a flying hotel with a swimming pool and a restaurant — which sounds great until you try to make it leave the ground. Customers are experts on their problems. They are not experts on your solutions. When you ask them to design the product, you get a Frankenstein feature list that makes nobody happy.

What to ask instead: "What's the most frustrating part of how you handle this today? What breaks?" Understand the problem deeply enough that you can design the solution. That's your job.

5. "On a scale of 1 to 10, how important is [problem] to you?"

Why it seems smart: Quantitative data! You can put this in a spreadsheet and make a chart!

Why it's terrible: Almost nobody gives anything below a 7. Seriously. We've tracked this. In hundreds of interviews, we can count on one hand the number of times someone went below 6. The social dynamics of sitting across from someone who clearly cares about the topic make it nearly impossible to say "eh, 3 out of 10." So everything is a 7, 8, or 9, and you end up with a spreadsheet full of uniformly high numbers that tell you nothing about relative priority. It's the discovery equivalent of asking everyone at a wedding if the food is good.

What to ask instead: "Tell me about the last time this problem cost you time or money. What happened?" Stories with real consequences beat arbitrary numbers every time. If they can't think of a specific instance, the problem probably isn't that important — regardless of what number they would have given you.

6. "We're also thinking about adding AI — would that be valuable?"

Why it seems smart: You're testing a strategic direction! You're being forward-thinking!

Why it's terrible: In 2025, asking "would AI be valuable?" is like asking "do you like efficiency?" The answer is always yes. Nobody is going to tell you they prefer things to be slower and less intelligent. You've asked a question with only one socially acceptable answer. This is the discovery equivalent of asking "would you like more money?" You've learned nothing except that your interviewee is polite and alive. The same applies to any buzzword du jour — swap in "blockchain" in 2021 and you'd have gotten the same enthusiastic nods.

What to ask instead: "Walk me through the most tedious or repetitive part of your workflow right now." If there's a real automation opportunity, it'll emerge from the specifics of what they're doing — not from asking if they like magic.

7. "My mom/roommate/college friend thinks this is a great idea — what do you think?"

Why it seems smart: You're getting a second opinion! You're validating across different perspectives!

Why it's terrible: Let us be direct: asking friends and family for product feedback is like asking your dog if you're a good cook. The answer is always yes, and it has nothing to do with the food. Your mom loves you. Your roommate has to live with you. Your college friend owes you for that one time. None of these people will tell you your idea is bad, even if it's objectively terrible. Even worse, leading with "other people think it's great" turns the question into a social compliance test. You've essentially said "the correct answer is yes — do you agree?"

What to ask instead: Talk to strangers who actually match your target customer profile. And don't tell them anything about what other people think. Ask: "How do you deal with [problem] today?" If they don't have the problem, they're not your customer, and their opinion — positive or negative — is irrelevant.

8. "What do you think about our product?"

Why it seems smart: Open-ended! Inviting honest feedback! Very non-leading!

Why it's terrible: This question is so broad that the answer is guaranteed to be useless. It's the equivalent of "how's everything?" at a restaurant — you'll get "fine" or a rambling monologue that covers everything and reveals nothing. People don't know what you want to hear, so they'll default to either polite generalities ("it looks really clean") or focus on something trivial ("the blue button should be green"). You'll walk away with a grab bag of surface-level reactions and zero actionable insight.

What to ask instead: "Last time you used [product/prototype], what were you trying to accomplish? Did you get there?" Anchor the conversation in a specific task and a specific outcome. That's where the useful feedback lives.

9. "If we could do this for half the price of your current solution, would you switch?"

Why it seems smart: You're testing competitive positioning! Price sensitivity analysis!

Why it's terrible: You've just asked someone if they'd like to save money. Stop the presses. Of course they'll say yes. But switching costs are real, inertia is powerful, and "would you switch" in a hypothetical conversation has approximately zero predictive value for actual switching behavior. We've watched teams spend months building a cheaper alternative to an incumbent based on these answers, only to discover that nobody switches because the switching cost (retraining, data migration, workflow disruption) outweighs the savings. Your interviewee forgot to factor in all that pain when they casually said "sure, I'd switch."

What to ask instead: "Tell me about the last time you evaluated switching from your current tool. What happened?" If they've actually gone through a switching evaluation, you'll learn what mattered. If they haven't, that tells you the pain isn't bad enough to trigger action — which is arguably more important information.

10. "We're launching soon and spots are limited — can I put you on the early access list?"

Why it seems smart: You're creating urgency! Building a waitlist! Getting commitments!

Why it's terrible: This isn't discovery — it's sales cosplaying as research. The moment you introduce artificial scarcity into a discovery conversation, you've destroyed the entire dynamic. Your interviewee is now performing as a buyer, not reflecting as a user. They're making a low-cost social commitment ("sure, add me") that they have no intention of following through on. We've seen "waitlists" of 500 people convert at 2% because every single signup was a polite person avoiding an awkward moment. If your discovery interview ends with a sales close, you did not do discovery.

What to ask instead: "If we built a first version of this, would you be willing to spend 30 minutes giving us feedback on it?" This tests actual willingness to invest time — which is a much better proxy for real interest than adding an email to a list. And it keeps the relationship in research mode, where it belongs.

The Pattern to Remember

If you look across all ten of these questions, the underlying failure is the same: they let you hear what you want to hear instead of what you need to hear.

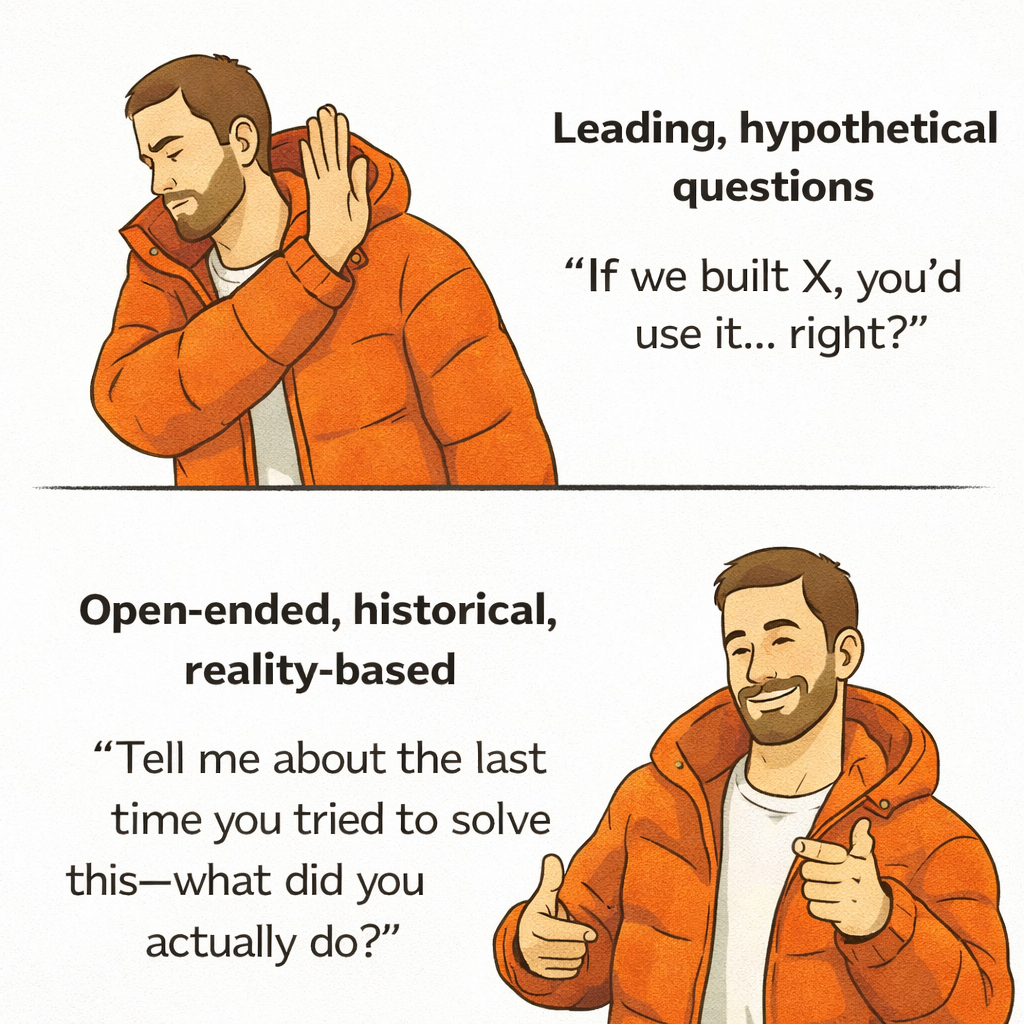

Good discovery questions share three traits:

- Past-tense. "Tell me about the last time..." beats "Would you ever..." every single time.

- Open-ended. If your question can be answered with yes or no, rewrite it.

- About their reality, not your product. You're there to understand their world. Your product is not part of their world yet.

The best interviews feel like a conversation where you barely talked. You asked a few good questions, then followed the thread wherever it went. You learned things you didn't expect. You left with more questions than you started with.

If you left the interview feeling validated, something probably went wrong. If you left feeling confused and curious, you're doing it right.

For the full playbook on how to structure these conversations, check out our guide to customer discovery conversations. It covers the questions, the structure, and the mindset — everything on the "do" side of this equation.

Frequently Asked Questions

What makes a customer discovery question 'bad'?

A bad discovery question is one that biases the answer before it's given. This includes leading questions ("Don't you think..."), hypothetical questions ("Would you..."), and questions that embed social pressure to agree. The telltale sign: if there's only one socially comfortable answer, you've written a bad question. Good questions make it equally easy to give you good news or bad news.

How do I know if I'm asking leading questions?

Record your interviews and listen back. It's painfully obvious in hindsight. Look for questions that start with "Don't you," "Wouldn't it be," "Isn't it true that," or any phrasing that reveals the answer you're hoping for. Another test: could a reasonable person answer your question with the opposite of what you want to hear without feeling awkward? If not, it's leading. Having someone outside your team review your question script before interviews is one of the simplest ways to catch this.

Can I salvage an interview where I asked bad questions?

Sometimes. If you realize mid-interview that you've been leading the witness, pivot to open-ended, past-tense questions: "Let's step back. Tell me about your day-to-day when it comes to [problem area]." Let them talk without steering. The second half of the conversation can still produce genuine insight. The bigger problem is when you've already anchored their thinking with your earlier questions. In that case, treat the interview as practice and schedule a fresh conversation with a different person, using better questions.

Related reading:

- How to Talk to Customers: A Guide to Customer Discovery — The workshop-tested playbook for conversations that drive PMF.

- Stop Vibe-Coding Your Way to Nowhere — Why shipping fast without customer understanding is the most expensive mistake.

- From Assumptions to Evidence — Why evidence-based product decisions win.
